Amazon's facial-recognition system can now detect fear

What's the story

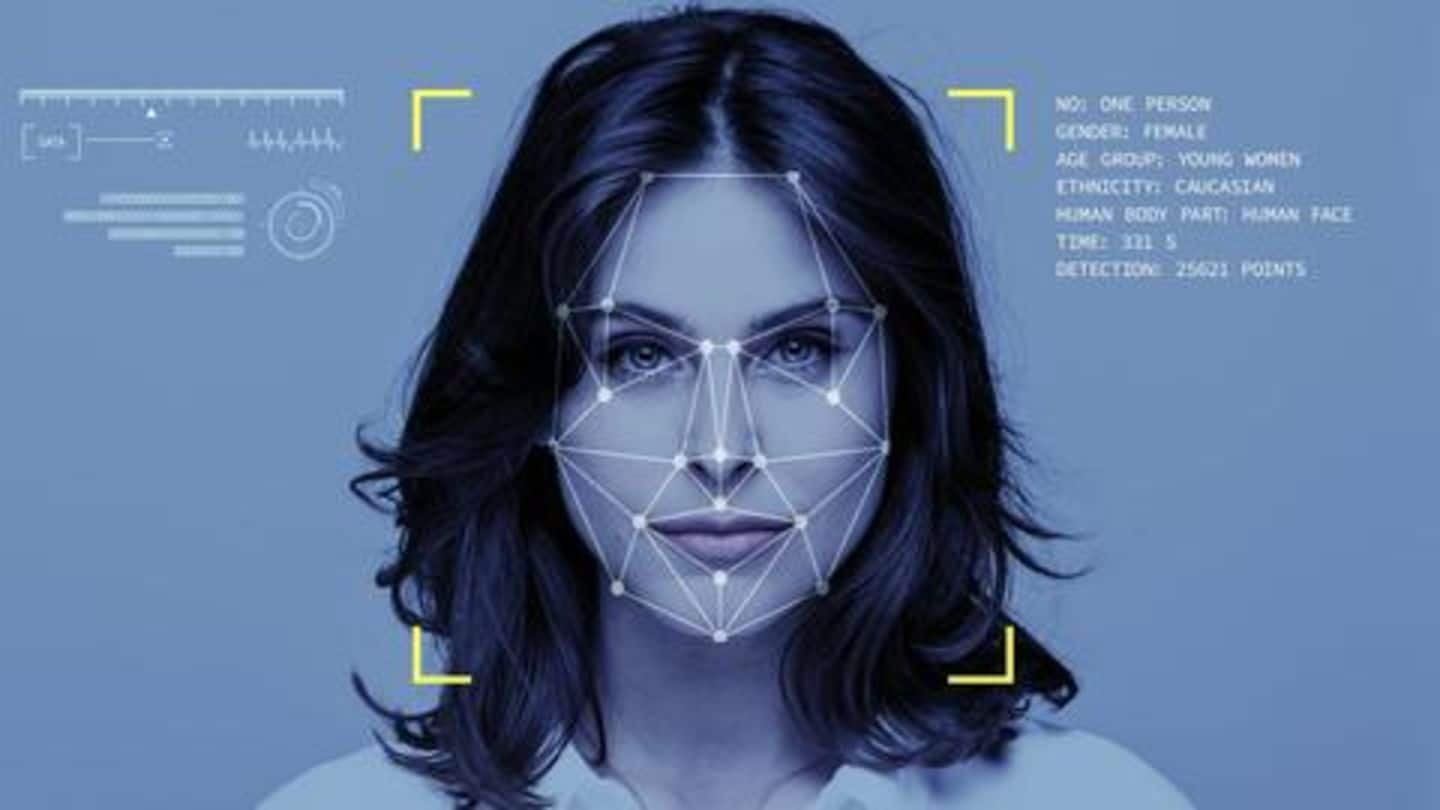

Just as concerns over data security continue to grow, a new report has revealed that Amazon's facial-recognition system, 'Rekognition', has become sophisticated enough to detect signs of fear on your face. The technology, which was previously caught up in a scary case of racial bias, has apparently been upgraded by Amazon Web Services to spot several facial emotions and features. Here's all about it.

Details

Rekognition can detect eight emotions, even age

In a recent blog post, Amazon announced several updates for Rekognition. It said the system can now use a facial scan of an individual to detect eight emotions - happy, sad, angry, surprised, disgusted, calm, confused, and frightened - on their face. Plus, it added, the tech's ability to estimate a person's age and gender has also been improved.

Working

Meaning, it can provide a narrower age range

The upgrade means Rekognition can now estimate your age by doing nothing but looking at your face. Previously, it returned a wide age range after scanning a face, but now, that range has been narrowed down, which Amazon says makes the results more accurate. Now, that sounds scary, especially considering the potential for misuse if the tech falls into the wrong hands.

Doubt

However, there's still an element of doubt

While Amazon's ambitious claims are hard to ignore, it is worth noting that there is no direct evidence that these capabilities actually work with a high level of accuracy. To recall, the Rekognition system had previously drawn flak for being racially biased; it made mistakes while identifying people of color, which, many argued, could pose a threat to marginalized groups.

Do you know?

Rekognition identified Congress members as criminals

Further, Rekognition had also misidentified as many as 28 members of Congress as criminals in one of the many tests conducted to assess its capabilities. Another report from a few days ago revealed that it falsely matched a lawmaker's photo to a mugshot.

Application

Several US agencies are already using Rekognition

The continued development of Rekognition, despite its previous issues and shortcomings, raises concerns about potential misuse in different situations. The tech is already being used by several police departments and law enforcement agencies, with Gizmodo noting that the company has no way to ensure it's being used properly. Some reports indicate the US Immigration and Customs Enforcement could also be using the system, which is even scarier.

Twitter Post

Here's what computing scientist Corey Quinn said about the move

I mean, if they had come to me and asked "Hey Corey, what's the dumbest possible move we could make in this moment" I don't think I'd have come up with something this horrible.

— Corey Quinn (@QuinnyPig) August 12, 2019