Meta unveils custom AI chips amid data center expansion

What's the story

Meta has unveiled four custom-designed chips tailored for artificial intelligence (AI) workloads as part of its major data center expansion. The new chips belong to the Meta Training and Inference Accelerator (MTIA) family, which was first introduced in 2023 and updated with a second-generation version in 2024.

Performance boost

In-house chips provide Meta with a competitive edge

Yee Jiun Song, Meta's VP of Engineering, told CNBC that the company's custom chips, manufactured by Taiwan Semiconductor Manufacturing Company (TSMC), offer better price-to-performance across its data center fleet. The strategy also diversifies Meta's silicon supply and gives it some insulation from price changes. "This is a little bit more leverage," Song said, explaining the benefits of the custom chip design.

Chip deployment

MTIA 300 chip in use for training smaller AI models

The first of the new chips, MTIA 300, was deployed a few weeks ago. It is designed to train the smaller AI models that power Meta's core ranking and recommendation tasks, such as showing relevant content and online ads on Facebook and Instagram. The other chips in the series (MTIA 400, MTIA 450, and MTIA 500) are meant for advanced generative AI inference tasks such as creating images or videos from text prompts.

Upcoming launch

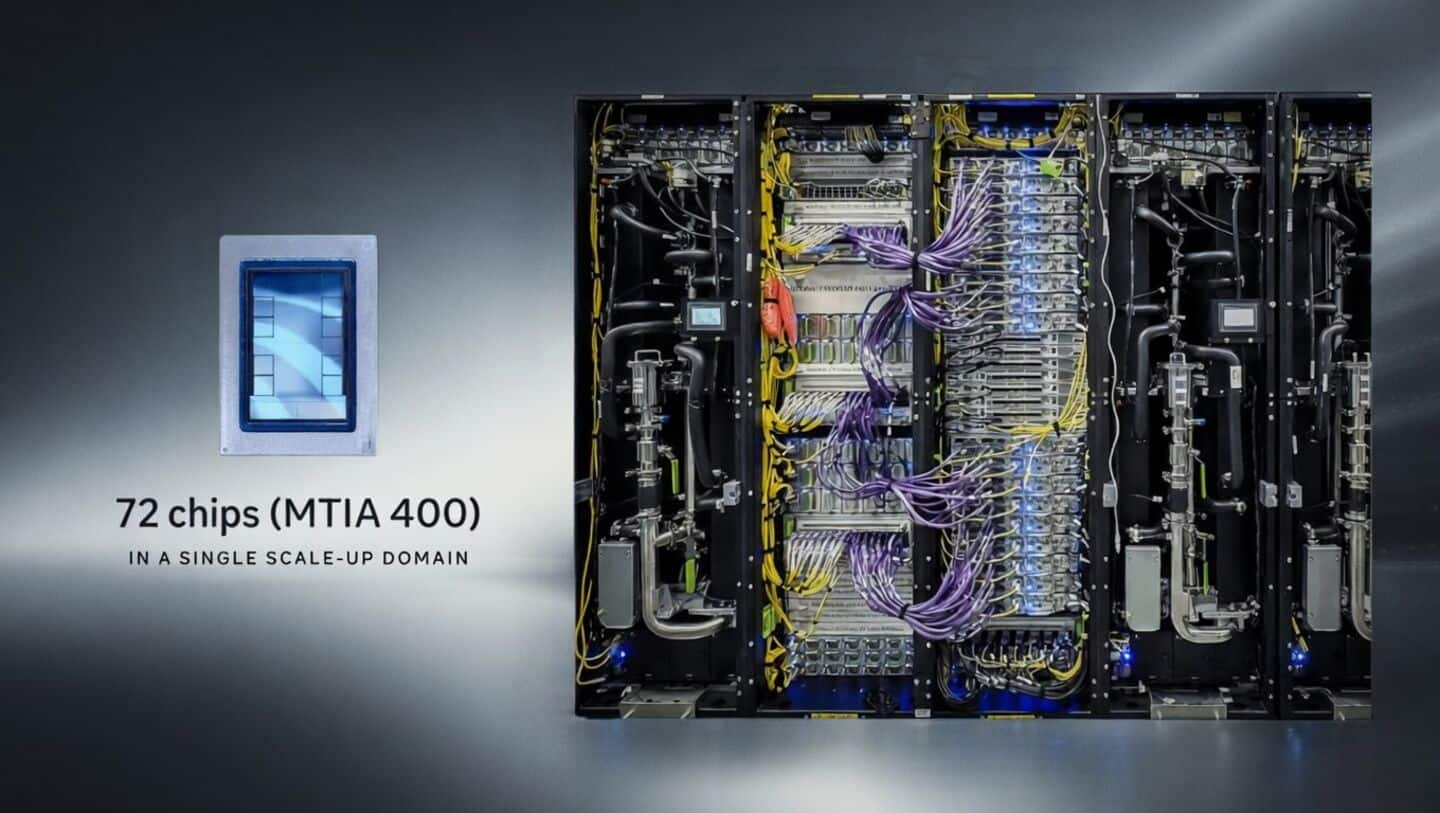

MTIA 400 chip set to go live soon

The MTIA 400 chip, designed to speed up AI inference tasks, has completed its testing phase and will be deployed in Meta data centers soon. A single rack in a Meta data center will house 72 of these chips. The remaining two chips, MTIA 450 and MTIA 500, are expected to go live by 2027.

Expansion strategy

Meta's aggressive data center expansion to bolster AI capabilities

Meta's AI spending spree includes a massive data center in Louisiana and two others in Ohio and Indiana. The company is also looking to lease space at the Stargate site in Texas after OpenAI and Oracle scrapped plans to expand their AI data center there. The expansion underscores Meta's push to build out its AI capabilities.

Distinct strategy

Supply chain challenges for Meta's ambitious silicon roadmap

Unlike other tech giants, which offer their AI chips through their cloud computing platforms, Meta uses its MTIA chips entirely for internal purposes. The upcoming MTIA chips will carry more high-bandwidth memory (HBM) to power generative AI inference tasks. However, the tech industry's broad AI push has led to a shortage of memory chips in the wider market, which could constrain supply for Meta's ambitious silicon roadmap.