Google turns the mouse cursor into an AI assistant

What's the story

Google DeepMind is experimenting with a new way to use the mouse pointer as part of its Gemini project. The idea is to treat the cursor's position as a real-time input signal for the AI model, rather than only as a marker of where the cursor sits on screen. This would allow Gemini to understand what users are pointing at, which could make interactions more intuitive and efficient.

User experience

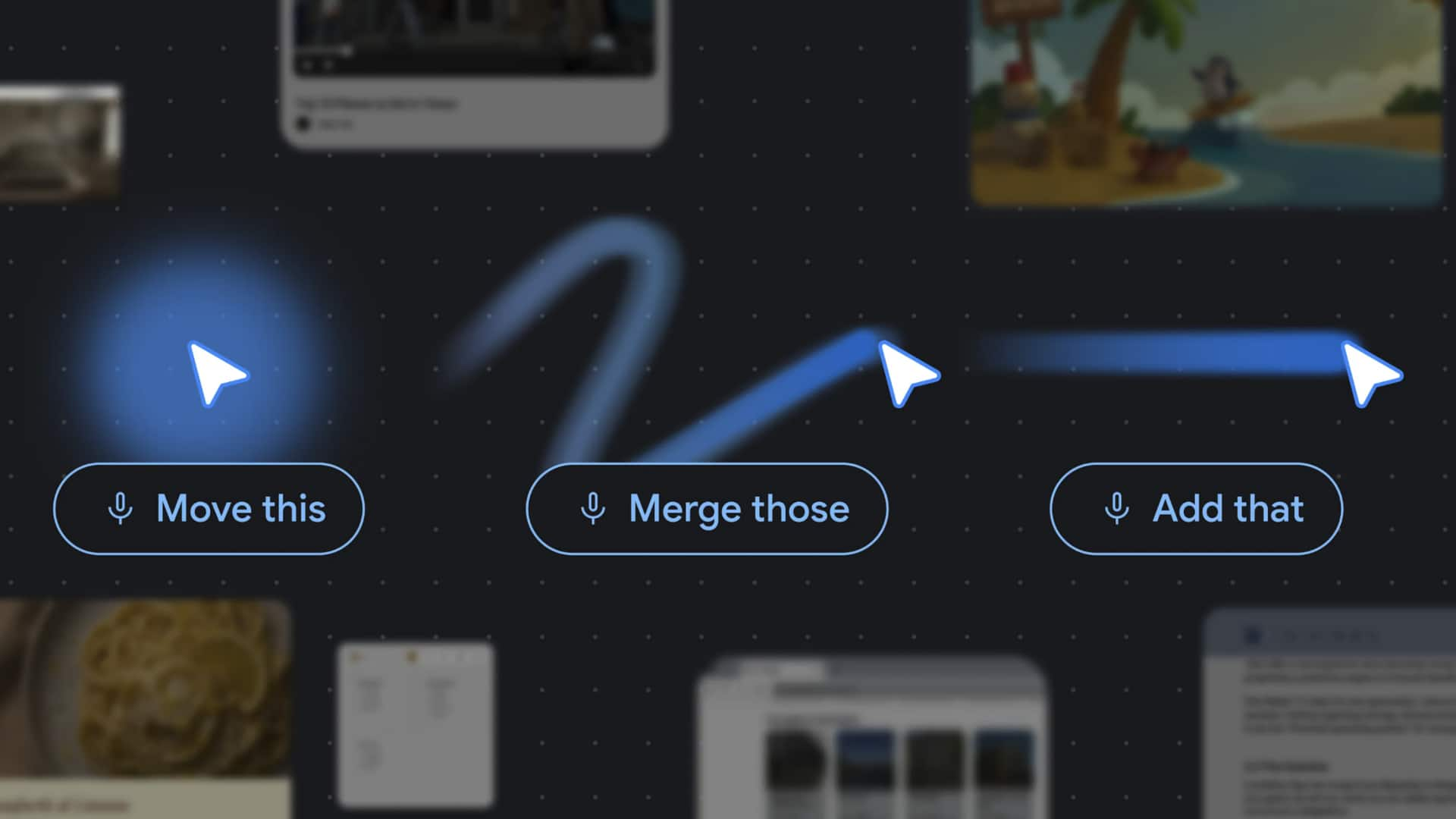

AI pointer simplifies interactions with LLMs

The AI pointer, as DeepMind calls it, aims to simplify user interactions with large language model (LLM)-based assistants. Instead of describing their needs in full sentences and manually copying and pasting relevant content into a chat window, users could rely on cursor position and hover state to provide context. For instance, hovering over a data table and saying "make a pie chart" gives the model enough context to act without a longer prompt.
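To make the data table example concrete, here is a minimal sketch of how a short spoken command might be combined with whatever the cursor is hovering over. DeepMind has not published an implementation, so every name here (`HoverTarget`, `build_prompt`, the prompt wording) is a hypothetical chosen for illustration, not part of any Gemini API.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class HoverTarget:
    """Hypothetical description of the element under the cursor."""
    kind: str      # e.g. "table", "image", "paragraph"
    content: str   # text serialization of the hovered element


def build_prompt(utterance: str, target: Optional[HoverTarget]) -> str:
    """Combine a short command with the hovered content, if there is any."""
    if target is None:
        # No hover context: the model only sees the bare command.
        return utterance
    return (
        f"The user is pointing at a {target.kind}:\n"
        f"{target.content}\n\n"
        f"Request: {utterance}"
    )


# Assumed example data standing in for a hovered table.
table = HoverTarget(kind="table", content="region,sales\nNorth,120\nSouth,80")
prompt = build_prompt("make a pie chart", table)
print(prompt)
```

The point of the sketch is that the user supplies only the four-word command; the surrounding context is assembled from the hover state rather than typed or pasted.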

Practical applications

Eliminating the need for precise instructions

Other demo scenarios include highlighting a recipe and saying "double these ingredients," pausing a video on a frame to generate a restaurant booking link, and converting a photo of a handwritten note into an interactive to-do list. The system is designed to remove the need to spell out precise instructions, a step DeepMind says is where the friction in AI-assisted work actually lies.

Developer implications

Implications for AI-native tools and developer assistants

The AI pointer also has implications for teams building AI-native tools or developer assistants. It bridges the gap between what a user sees and what the model sees, without putting the burden of context assembly on the user. If cursor position and screen region become first-class inputs alongside text and voice, it could significantly expand the design surface for AI-assisted workflows.
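For teams thinking about what "first-class input" might mean in practice, the following is a rough sketch under stated assumptions: a request type that carries pointer coordinates and a resolved screen region next to text and voice. The types `Region` and `AssistantInput` and the function `resolve_region` are invented for this example, and the smallest-enclosing-region rule is one plausible choice, not something DeepMind has described.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple


@dataclass(frozen=True)
class Region:
    """Hypothetical labeled screen region with a pixel bounding box."""
    label: str
    x: int
    y: int
    width: int
    height: int

    def contains(self, px: int, py: int) -> bool:
        return (self.x <= px < self.x + self.width
                and self.y <= py < self.y + self.height)


@dataclass(frozen=True)
class AssistantInput:
    """Hypothetical request: pointer data sits alongside text and voice."""
    text: Optional[str] = None
    voice_transcript: Optional[str] = None
    pointer: Optional[Tuple[int, int]] = None
    region: Optional[Region] = None


def resolve_region(regions: List[Region], px: int, py: int) -> Optional[Region]:
    """Return the smallest region containing the point, or None if no hit."""
    hits = [r for r in regions if r.contains(px, py)]
    return min(hits, key=lambda r: r.width * r.height, default=None)


# Assumed layout: a full page with a recipe block inside it.
regions = [
    Region("page", 0, 0, 1280, 800),
    Region("recipe ingredients", 100, 200, 400, 300),
]
pointer = (150, 250)
request = AssistantInput(
    voice_transcript="double these ingredients",
    pointer=pointer,
    region=resolve_region(regions, *pointer),
)
print(request.region.label)
```

Picking the smallest enclosing region is a simple way to prefer the most specific thing under the cursor; a real system would likely need richer signals such as selection state or element semantics.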

Future prospects

No product or release date announced yet

As of now, DeepMind has not announced a product, a release date, or the specific Gemini model version powering the pointer behavior. The demos are described as experimental and should be read as illustrative scenarios rather than confirmed shipping capabilities. For developers, availability in AI Studio would be the most immediate signal of what the implementation surface for this capability looks like.