Musk slams Anthropic as Claude-maker accuses Chinese firms of theft

What's the story

AI start-up Anthropic has accused three Chinese companies of conducting "industrial-scale" distillation attacks on its Claude AI model. The firms, DeepSeek, Moonshot AI, and MiniMax, allegedly created more than 24,000 fake accounts and generated over 16 million interactions with Claude to extract its capabilities and improve their own models. Elon Musk has since lashed out at Anthropic over the matter.

Data theft claims

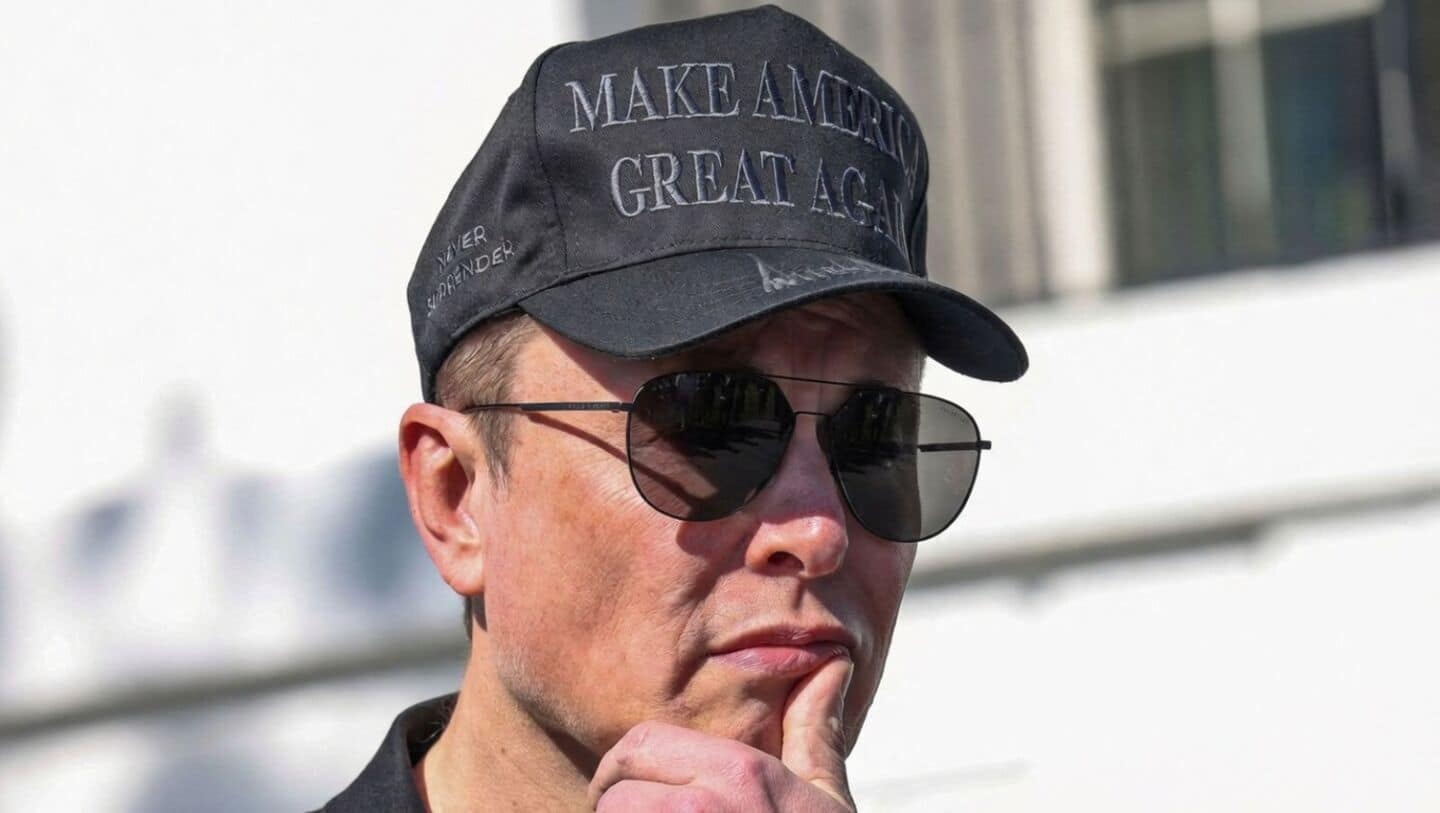

Musk accuses Anthropic of stealing training data

Responding to Anthropic's accusations, Musk claimed that the start-up is itself "guilty" of stealing training data on a massive scale. "Anthropic is guilty of stealing training data at massive scale and has had to pay multi-billion dollar settlements for their theft. This is just a fact," Musk wrote on X. He also shared screenshots of X Community Notes to back his claim. Musk's criticism comes amid wider debates over copying and data ethics in AI.

Company statement

Anthropic details distillation attacks by Chinese labs

In a blog post, Anthropic said it had identified distillation attacks by DeepSeek, Moonshot AI, and MiniMax. Distillation is a technique in which a less capable model is trained on the outputs of a stronger one. While the technique is often used for legitimate purposes in AI development, Anthropic warned that foreign labs can also exploit it to illicitly extract advanced capabilities from American models.

Urgent response

National security risks and call for action

Anthropic warned that distillation attacks are growing in intensity and sophistication, and that the window to counter them is narrowing. The company called for rapid, coordinated action among industry players, policymakers, and the global AI community. It also flagged national security concerns over "illicitly distilled models," which may lack safeguards and could be used by state and non-state actors for malicious cyber activity.