Apple's smart glasses could rely on hand gestures for apps

What's the story

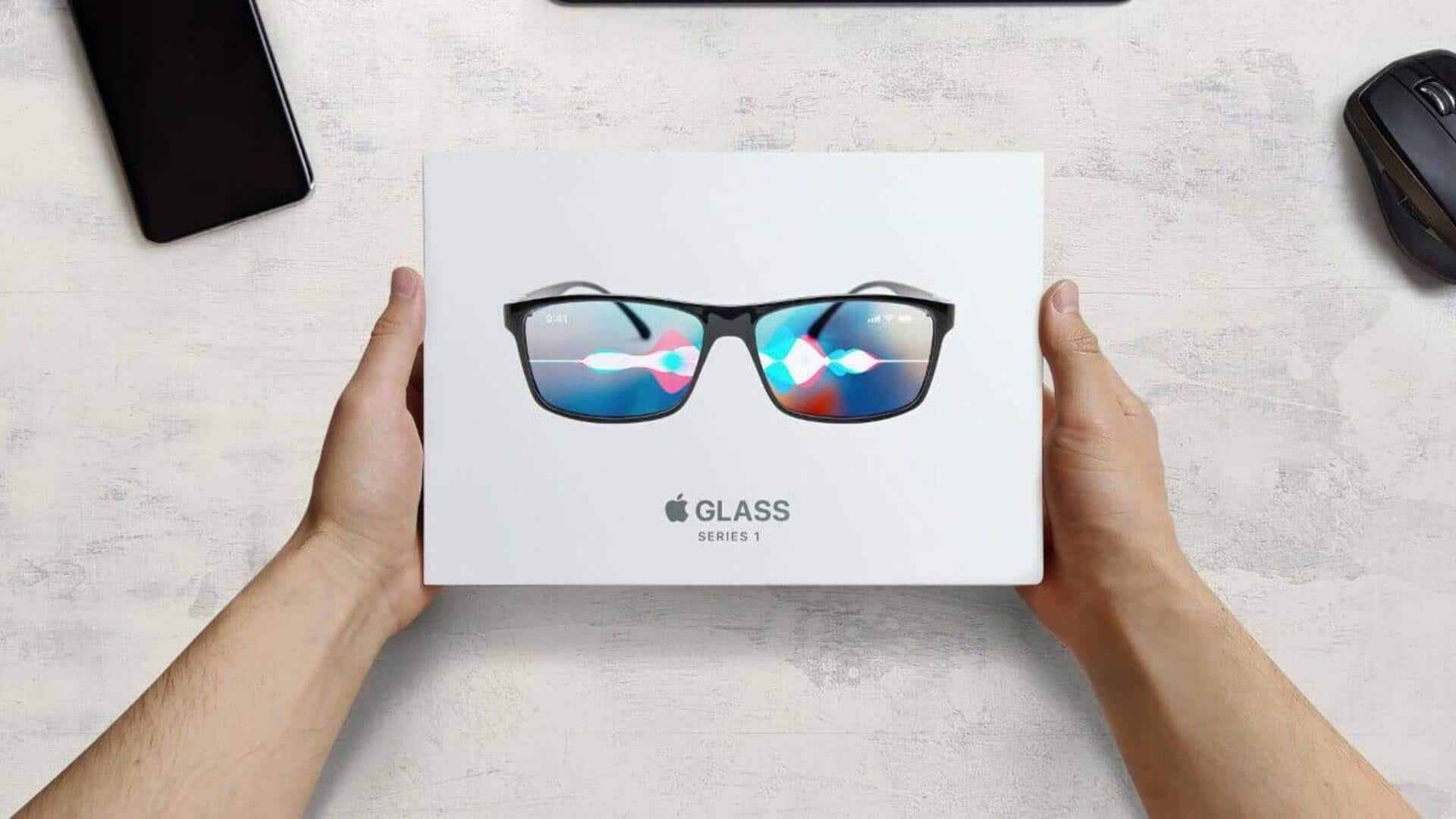

Apple is working on a pair of AI smart glasses to take on the likes of Meta Ray-Bans. The upcoming device will come with two cameras: a high-resolution one for taking photos and videos, and a lower-resolution wide-angle lens for reading hand gestures. The latter will also provide visual input for Siri, Apple's virtual assistant.

Gesture integration

Focus on gesture-based input

Apple has been focusing on gesture-based input for its devices, including the Vision Pro. There are also rumors of an update to AirPods Pro with low-resolution cameras and gesture support. The move makes sense because gestures are a natural way to interact without a screen, and the first version of the smart glasses will not have a display at all. Future models may come with one for augmented reality (AR) features.

Design considerations

Battery life constraints and material choice

Battery life is a major constraint for Apple's smart glasses because the company wants to keep them slim and lightweight. This has led to a stripped-down feature set that avoids energy-intensive technologies like LiDAR, 3D cameras, or screens. The company is also testing different styles for the glasses and plans to use acetate, a lightweight, plant-based material more flexible than conventional plastic.

AI capabilities

Competing against Meta's Ray-Bans

Apple's smart glasses will come with an advanced version of Siri, which is set to debut in iOS 27. The device will let users take photos, record videos, and make calls. Users can also ask Siri for information about their surroundings. This feature set is similar to that of Meta Ray-Bans, which Apple hopes to compete against.