Google reaffirms ethical AI commitment, in-house researchers differ

What's the story

Barely months after Google fired two leaders of its Artificial Intelligence (AI) ethics research wing, it has promised to double its research staff studying responsible AI. Additionally, Google CEO Sundar Pichai has pledged support and funding for more ethical AI projects. However, some members of the search giant's tight-knit ethical AI group said that the reality on the ground differs from what Google is presenting publicly.

Headless

Google ousted AI researchers for work critical of the company

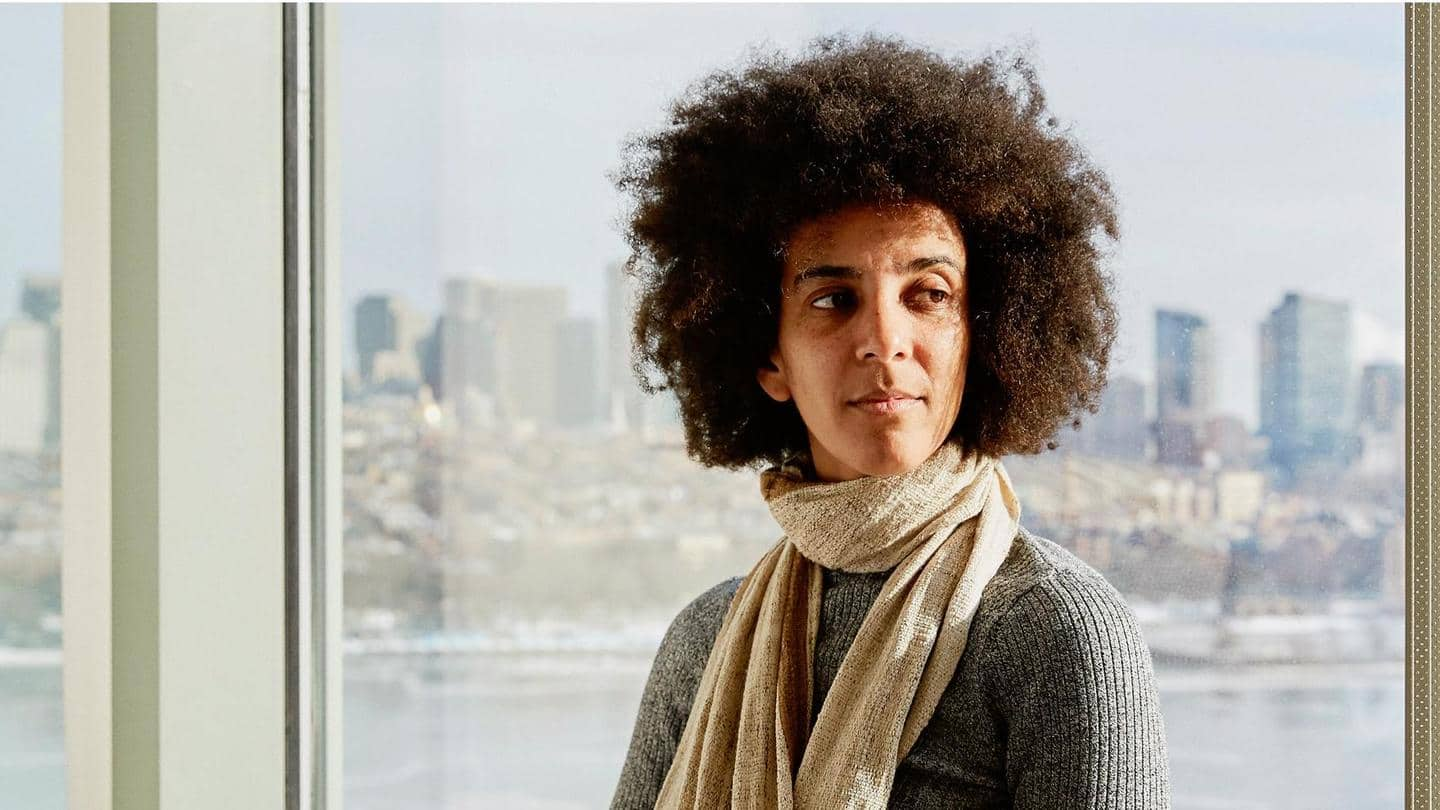

In February, we reported that Google had fired Margaret Mitchell, who founded the company's ethical AI research group. Shortly before firing Mitchell, the company had fired her colleague Timnit Gebru. Both researchers had authored publicly available research papers (which were later censored) that were critical of Google's AI-powered facial recognition and language processing systems. They alleged that these systems were biased and could hurt marginalized individuals.

Serious doubts

Google admits reputation took a hit due to Gebru's dismissal

Google initially hired the scientists with a promise of research freedom, which held only until their research became critical of Google itself. Meanwhile, Google's head of AI, Jeff Dean, admitted in May that the company took a "reputational hit" due to the controversy surrounding Gebru's work and dismissal. Vox reported that members of Google's AI research wing have serious doubts that the company can recoup its lost credibility in the academic community.

Bad vibes

Internally, researchers continue to seek guidance from ousted leaders

The 10-member team reportedly doubts that Google will even listen to the research group. Google has yet to hire replacements for Gebru and Mitchell. Some of the research group members convene in private messaging groups, manage themselves on an "ad-hoc basis," and seek guidance from their former bosses. These researchers are also considering leaving to work for other companies or to return to academia.

Any good?

Ethical AI research has no point if Google silences criticism

While Google leverages AI to solve important issues such as cancer diagnosis and earthquake detection, academics believe it is reluctant to heed the ethical AI group's advice on more profitable and questionable applications. The research group's future remains uncertain, given that Google seems to have created it to maintain an illusion of checks and balances, without expecting it to actually do its job.